Cache memory is a small, high-speed memory located close to the CPU that holds frequently used data and instructions. Acting as a buffer between the fast CPU and the slower main memory, it improves a computer's performance by reducing the time the CPU spends waiting for data.

Modern processors typically have three levels of cache: L1, L2, and L3. L1 is the smallest and fastest; it is built into each CPU core and provides the quickest access to critical data. L2 is larger and somewhat slower; in current designs it sits on the same chip as the CPU, while older systems sometimes placed it on a separate chip nearby. L3 is larger and slower still, but remains faster than main memory, and is usually shared among multiple CPU cores.
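
To make the trade-off between the levels concrete, the following is a minimal sketch of how the expected cost of a memory access could be estimated for a three-level hierarchy. The latencies and hit rates are illustrative assumptions, not measurements of any real processor, and the function name `average_access_time` is hypothetical. Each hit rate is taken to be local, meaning the fraction of accesses reaching that level that are satisfied there.

```python
# Illustrative numbers only: (name, access latency in cycles, local hit rate).
# Real values vary by processor and workload.
LEVELS = [
    ("L1", 4, 0.90),
    ("L2", 12, 0.80),
    ("L3", 40, 0.70),
]
MAIN_MEMORY_LATENCY = 200  # cycles, assumed


def average_access_time(levels, memory_latency):
    """Expected cycles per access when the levels are probed in order."""
    expected = 0.0
    reach_probability = 1.0  # chance that an access gets as far as this level
    for _name, latency, hit_rate in levels:
        # Every access that reaches this level pays its latency.
        expected += reach_probability * latency
        # Only the misses continue to the next level.
        reach_probability *= 1.0 - hit_rate
    # Accesses that miss every level also pay the main-memory latency.
    return expected + reach_probability * memory_latency
```

With these assumed numbers the estimate comes to about 7.2 cycles per access, compared with 200 cycles if every access went to main memory.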

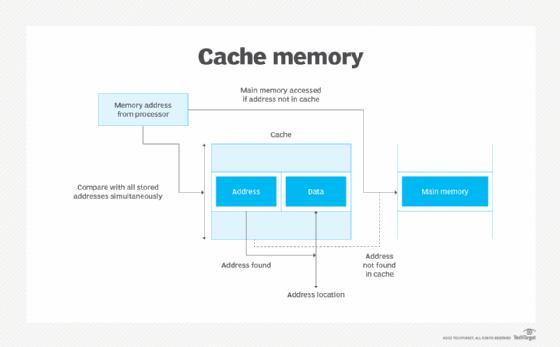

Cache memory relies on the principle of locality, which comes in two forms. Temporal locality means that data accessed recently is likely to be accessed again soon; spatial locality means that data stored near a recently accessed address is likely to be accessed next. Caches exploit the first by keeping copies of recently used data and the second by loading memory in fixed-size blocks (cache lines) rather than single bytes, so fewer accesses have to go to the slower main memory.
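
The effect of locality can be seen in a small simulation. The sketch below models a toy direct-mapped cache, in which each memory block can occupy exactly one slot; the sizes (64 slots of 64 bytes) and the function name `hit_rate` are assumptions chosen for illustration, and real caches are usually set-associative and considerably more complex.

```python
def hit_rate(addresses, num_lines=64, line_size=64):
    """Hit rate of a toy direct-mapped cache over a sequence of byte addresses."""
    lines = [None] * num_lines  # each slot remembers the block number it holds
    hits = 0
    for addr in addresses:
        block = addr // line_size   # memory block containing this byte
        index = block % num_lines   # the single slot that block may occupy
        if lines[index] == block:
            hits += 1
        else:
            lines[index] = block    # miss: load the whole block into the slot
    return hits / len(addresses)


# Three access patterns with the same number of accesses (4096 each).
sequential = [i * 4 for i in range(4096)]     # 4-byte items, back to back
strided = [i * 4096 for i in range(4096)]     # every access lands in a new block
repeated = [i * 4 for i in range(256)] * 16   # small working set, reused 16 times
```

Under these assumptions the sequential scan hits on 15 of every 16 accesses (spatial locality), the repeated pattern misses only on its first pass (temporal locality), and the strided pattern never hits because each access touches a block that has not been loaded before.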

The effectiveness of a cache is measured by its hit rate: the percentage of memory accesses that are satisfied from the cache. A high hit rate means the CPU spends less time waiting for data, which leads to faster program execution. Because a cache is much smaller than main memory, the processor must choose which data to keep and which to evict when new data arrives; modern processors use replacement policies, such as variants of least recently used (LRU), to keep the data most likely to be needed again.
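
The idea of deciding which data to keep and which to replace can be sketched with a least-recently-used (LRU) policy, one common textbook choice. The class below is a hypothetical software model, not a description of how any particular processor implements replacement; hardware typically uses cheaper approximations of LRU. It also tracks the hit rate as defined above.

```python
from collections import OrderedDict


class LRUCache:
    """Fully associative cache of block numbers with LRU eviction."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.blocks = OrderedDict()  # least recently used first, most recent last
        self.hits = 0
        self.accesses = 0

    def access(self, block):
        """Record an access to `block`; return True on a hit, False on a miss."""
        self.accesses += 1
        if block in self.blocks:
            self.hits += 1
            self.blocks.move_to_end(block)    # mark as most recently used
            return True
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)   # evict the least recently used block
        self.blocks[block] = True
        return False

    def hit_rate(self):
        """Fraction of accesses so far that were hits."""
        return self.hits / self.accesses if self.accesses else 0.0
```

For example, with a capacity of two blocks and the access sequence 1, 2, 1, 3, 2, only the third access is a hit: loading block 3 evicts block 2, which was used less recently than block 1, so the final access to block 2 misses again.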

In summary, cache memory is a crucial component of modern computers, improving performance by providing rapid access to frequently used data. Its hierarchical structure, its use of temporal and spatial locality, and its replacement policies make it indispensable for efficient CPU operation.