Main memory, also called RAM (random-access memory), is the level that follows cache in the hierarchy. Though slower than cache and registers, it is significantly larger than both. It acts as working storage for the current data and active processes. RAM reads and writes much faster than secondary storage, but it is volatile, meaning it loses its stored information when power goes off.

Secondary storage refers to devices such as hard disk drives (HDDs) and solid-state drives (SSDs). These devices are non-volatile: they retain data even after power has been switched off, and they cost less per bit of information stored than RAM. They are, however, much slower, which makes them poorly suited to direct data retrieval by the CPU during active tasks. Secondary storage is best suited to long-term storage of operating systems, applications, and large files.

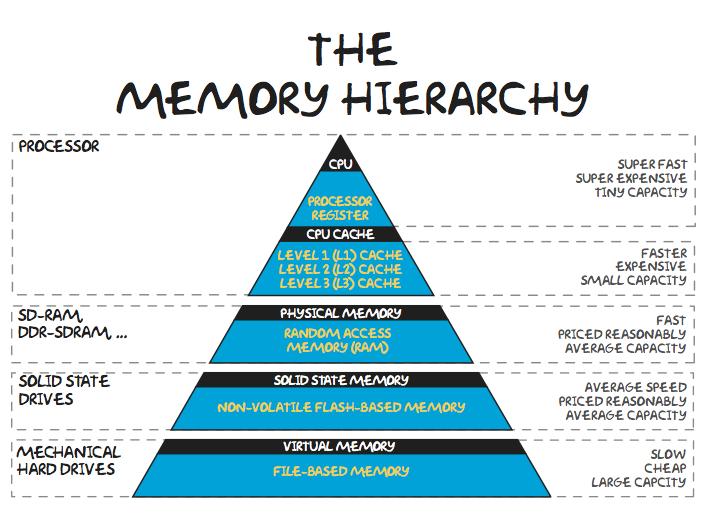

The memory hierarchy is a central element of computer architecture and is organized around trade-offs among access speed, capacity, and cost. Strategies for using the hierarchy effectively include data caching, memory management, and algorithms that keep the data a program needs in the faster layers, first the CPU cache and then main memory. By holding frequently accessed data in a small, fast cache and leaving less frequently used data in larger, cheaper, but slower storage, a computer can achieve both fast data retrieval and large storage capacity.
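The caching idea can be illustrated with a minimal sketch. It assumes a least-recently-used (LRU) replacement policy, which is one common choice and not the only one. The class `TinyCache`, the dictionary `slow_memory`, and the access pattern are all hypothetical names and values made up for illustration. A small, fast level sits in front of a larger, slower one and counts how often a read is served from the fast level.

```python
from collections import OrderedDict


class TinyCache:
    """Hypothetical LRU cache standing in for a small, fast memory level."""

    def __init__(self, capacity, backing_store):
        self.capacity = capacity            # number of entries the fast level can hold
        self.backing_store = backing_store  # the larger, slower level
        self.entries = OrderedDict()        # ordered from least to most recently used
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.entries:
            # Fast path: the data is already in the cache.
            self.hits += 1
            self.entries.move_to_end(address)  # mark as most recently used
            return self.entries[address]
        # Slow path: fetch from the larger level and keep a copy.
        self.misses += 1
        value = self.backing_store[address]
        self.entries[address] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)   # evict the least recently used entry
        return value


# Made-up "slow memory" with 100 addresses.
slow_memory = {addr: addr * 10 for addr in range(100)}
cache = TinyCache(capacity=4, backing_store=slow_memory)

# A pattern that reuses a few addresses, as many real programs tend to do.
access_pattern = [1, 2, 3, 1, 2, 3, 1, 2, 50, 1]
values = [cache.read(a) for a in access_pattern]

print(f"hits={cache.hits}, misses={cache.misses}")  # hits=6, misses=4
```

In this run, six of the ten reads are served from the small cache because the same few addresses are reused. Real hardware caches work on fixed-size lines and are implemented in circuitry, so this is only a conceptual model of the behavior.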

A well-organized memory hierarchy is fundamental for system architects, developers, and engineers who want to make computation more efficient and improve overall system performance.

Div:A

Kush Patel

53003230107